Knowing your existing assets, threats and countermeasures is a necessary first step in prioritising cyber risk management activities. Indeed, when driving improvements to an organisation's security posture, security leaders often begin by getting a view of how effective the security controls are.

A common approach is to perform a security assessment that involves interviewing stakeholders and reviewing policies in line with a security framework (e.g. NIST CSF).

A report is then produced that presents the current state and highlights the gaps. It can be used to gain wider leadership support for a remediation programme, justifying investment in security uplift initiatives. I wrote a number of these reports myself, both as a consultant and internally during my first few weeks as a CISO.

These reports have many merits, but they also have limitations. They are, by definition, point-in-time: the document is out of date the day after it’s produced, or even sooner. The threat landscape has already shifted, the state of assets and controls has changed, and business context and priorities are no longer the same.

Such reports also require a lot of effort, and therefore budget, to produce. External consultants are often called upon, burning through countless hours talking to people and reviewing stacks of documentation. I’m not trying to diminish the usefulness of such engagements: as mentioned above, they have their place in gaining an independent perspective, benchmarking yourself against industry peers, securing senior leadership support and much more. However, they also tend to rely on at least some degree of ‘expert judgment’, which may introduce subjectivity and bias. For example, an EDR vendor conducting such an assessment might find the temptation to recommend an uplift of your EDR capability too great to resist. Due to the length and cost of such engagements, they tend to be performed infrequently, perhaps when a budget increase needs to be justified or a decision on a particular direction needs to be made.

So we have an assessment that is point-in-time, expensive, infrequent, manual, time-consuming to perform and potentially biased. Assuming your security controls have been selected and implemented to address key risks, how do you observe their actual state on an ongoing basis? There’s got to be a better way than waiting for the next security review. And there is: continuous security monitoring.

Imagine a world where you can get an ongoing, automated and data-driven view of your security posture. You can look at a dashboard with a near real-time view of the coverage and effectiveness of your controls across the entire organisation. You can also drill down from a high-level overview into system-level metrics, slice and dice the data, and tailor the presentation to different audiences based on their needs. Not only would this provide much-needed visibility and situational awareness and support compliance with policies, laws and regulations, it would also complement the point-in-time security assessments I described above and aid risk-based decision-making and response as the landscape continuously evolves.
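To make the drill-down idea more concrete, here is a minimal sketch of how per-system control measurements could be rolled up to different levels of a dashboard. The `ControlMeasurement` record and its fields (business unit, system, asset counts) are hypothetical; your own data model will depend on what your tools report.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlMeasurement:
    business_unit: str   # hypothetical organisational grouping
    system: str
    control: str         # e.g. "EDR coverage"
    assets_total: int
    assets_compliant: int


def coverage(measurements, key):
    """Aggregate compliant/total asset counts by `key` and return coverage percentages.

    `key` is a function that picks the grouping, so the same data can be viewed
    at organisation, business-unit or system level.
    """
    totals = defaultdict(lambda: [0, 0])  # group -> [compliant, total]
    for m in measurements:
        group = key(m)
        totals[group][0] += m.assets_compliant
        totals[group][1] += m.assets_total
    # Percentages are weighted by asset count rather than averaging per-system percentages
    return {g: (round(100 * c / t, 1) if t else None) for g, (c, t) in totals.items()}
```

For example, `coverage(data, key=lambda m: m.control)` would give the executive view, while `key=lambda m: (m.business_unit, m.control)` or `key=lambda m: (m.system, m.control)` slices the same data for business-unit or system owners. Weighting by asset count stops a handful of small, fully covered systems from masking a large gap elsewhere.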

Public cloud providers like Azure, GCP and AWS are leading the way with continuous compliance capabilities for their environments. I previously wrote about tools like AWS Config and Security Hub that provide continuous visibility into your cloud security posture. Such detective mechanisms should be augmented with preventative (e.g. AWS Service Control Policies) and, where possible, corrective (e.g. automated vulnerability remediation) controls. An approach that needs to cater for both cloud and on-prem assets, however, makes relying on cloud-native tooling alone problematic. It might work for a small cloud-first startup, but it would be challenging to implement in a complex enterprise environment.
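Even when cloud-native tooling isn't the whole answer, it can still serve as one automated data feed among many. As an illustration, the sketch below summarises AWS Config rule compliance using the `describe_compliance_by_config_rule` operation of a boto3 Config client, following `NextToken` pagination. The client is passed in so the function can be tested without AWS access; treating a missing compliance value as `INSUFFICIENT_DATA` is my own assumption.

```python
from collections import Counter


def summarise_config_rule_compliance(config_client):
    """Count AWS Config rules by compliance type and list the non-compliant ones.

    `config_client` is expected to behave like boto3.client("config").
    """
    counts = Counter()
    non_compliant = []
    kwargs = {}
    while True:
        response = config_client.describe_compliance_by_config_rule(**kwargs)
        for rule in response.get("ComplianceByConfigRules", []):
            # Assumption: a rule with no reported compliance is treated as INSUFFICIENT_DATA
            status = rule.get("Compliance", {}).get("ComplianceType", "INSUFFICIENT_DATA")
            counts[status] += 1
            if status == "NON_COMPLIANT":
                non_compliant.append(rule["ConfigRuleName"])
        token = response.get("NextToken")
        if not token:
            break
        kwargs["NextToken"] = token  # request the next page
    return counts, non_compliant
```

In practice you would call it with `summarise_config_rule_compliance(boto3.client("config"))` and push the result into the same pipeline as your on-prem data sources.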

The NISTIR 7756 publication defines continuous security monitoring as ‘a risk management approach to cybersecurity that maintains a picture of an organisation’s security posture, provides visibility into assets, leverages use of automated data feeds, monitors effectiveness of security controls, and enables prioritisation of remedies’.

Based on this definition, the essential characteristics of continuous monitoring are as follows:

- Maintains a picture of an organisation’s security posture

- Measures security posture

- Identifies deviations from expected results

- Provides visibility into assets

- Leverages automated data feeds

- Monitors continued effectiveness of security controls

- Enables prioritisation of remedies

- Informs automated or human-assisted implementation of remedies
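Several of these characteristics (measuring posture, identifying deviations from expected results and prioritising remedies) can be illustrated together. The sketch below compares measured metric values against agreed targets and ranks the shortfalls by a risk weighting. The metric names, targets and weights are purely hypothetical; in practice they would come from your risk owners and risk appetite.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    measured: float  # observed value, e.g. % of assets covered
    target: float    # expected result agreed with risk owners
    weight: int      # hypothetical risk weighting: higher means more important


def deviations(metrics):
    """Return (name, shortfall, priority) for metrics below target, highest priority first."""
    gaps = []
    for m in metrics:
        shortfall = m.target - m.measured
        if shortfall > 0:  # only metrics that miss their target are deviations
            gaps.append((m.name, shortfall, shortfall * m.weight))
    return sorted(gaps, key=lambda gap: gap[2], reverse=True)
```

A simple weighted shortfall is only one way to rank remedies; you could equally factor in asset criticality or threat intelligence, but the principle of comparing observed against expected values stays the same.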

The good news is that the technological components to support such an approach already exist. It is not, however, a single tool, regardless of what vendors might tell you, and it is hardly a technology challenge. Instead, the aim is to aggregate data and telemetry from a diverse set of existing solutions, such as malware detection and vulnerability management, and to adopt a format that allows you to form a view of control status based on these data points. Interoperability and normalisation become important aspects to consider.
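Here is a minimal sketch of what normalisation could look like: records from two tools are mapped into a common `Finding` schema and then combined into a per-asset control status. Both input formats are hypothetical stand-ins for real vendor exports, and the CVSS 7.0 pass/fail threshold is an assumption you would replace with your own policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    asset: str
    source: str
    control: str
    passed: bool
    detail: str


def from_vuln_scanner(record):
    # Hypothetical scanner export: {"host": ..., "cve": ..., "cvss": ...}
    return Finding(
        asset=record["host"].lower(),  # normalise asset names across tools
        source="vuln_scanner",
        control="vulnerability_management",
        passed=record["cvss"] < 7.0,   # assumption: high/critical findings fail the control
        detail=record["cve"],
    )


def from_antimalware(record):
    # Hypothetical anti-malware console export: {"endpoint": ..., "status": ...}
    return Finding(
        asset=record["endpoint"].lower(),
        source="antimalware",
        control="malware_protection",
        passed=record["status"] == "protected",
        detail=record["status"],
    )


NORMALISERS = {"vuln_scanner": from_vuln_scanner, "antimalware": from_antimalware}


def normalise(feeds):
    """feeds: mapping of source name -> list of raw records from that tool."""
    return [NORMALISERS[source](r) for source, records in feeds.items() for r in records]


def control_status_by_asset(findings):
    """A control passes for an asset only if every finding for that asset/control passes."""
    status = {}
    for f in findings:
        key = (f.asset, f.control)
        status[key] = status.get(key, True) and f.passed
    return status
```

Adding a new data source then means writing one more normaliser rather than changing the reporting layer.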

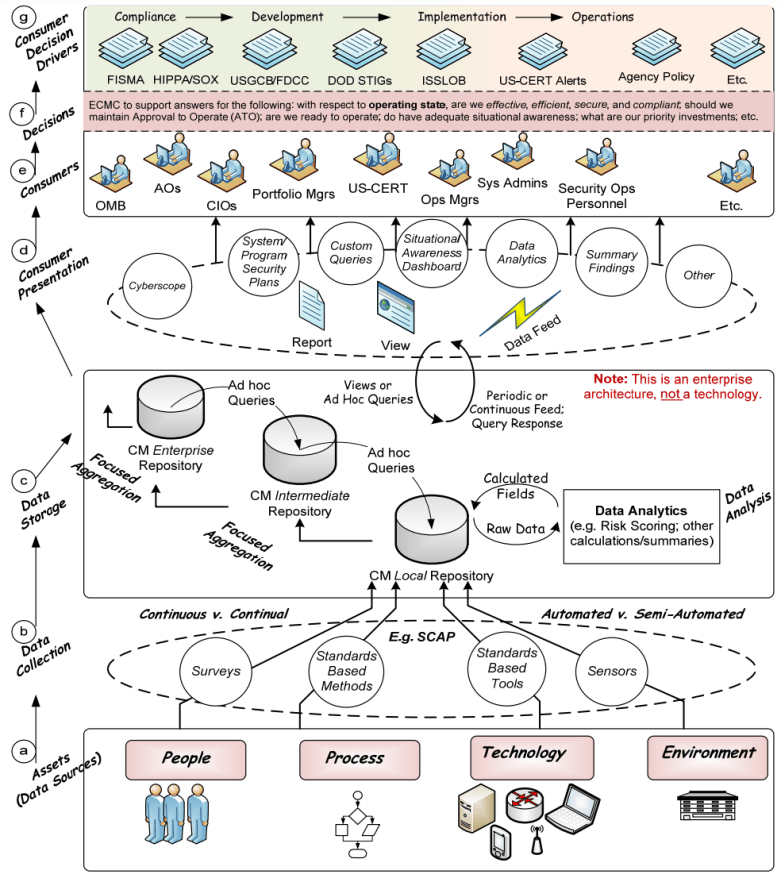

The diagram below outlines a high-level enterprise architecture for continuous monitoring. Following it bottom-up, we can trace data collection from various sources, including people, processes and technology, through to the storage and presentation layers, which in turn help drive timely and informed decision-making.

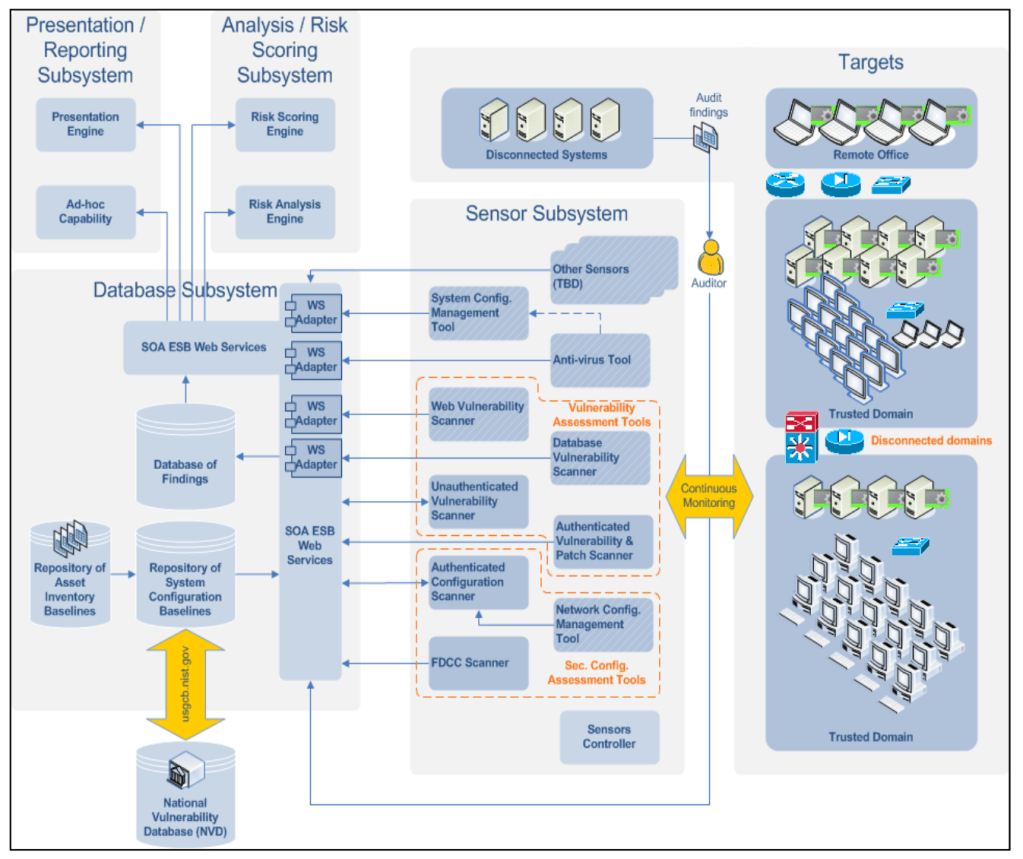

The diagram at the top of this article supports this view and depicts a contextual, product-agnostic representation of the subsystems, using vulnerability and configuration assessment as an example.

Providing an up-to-date view of security risks and controls through automated data collection, analysis and reporting is a complex undertaking that requires cross-functional collaboration. When implementing this in practice, I recommend going beyond the security team and leveraging wider enterprise capabilities. Sure, you can consolidate the data into your SIEM, but your organisation may already have data lake, analytics and dashboard platforms that you can leverage to your advantage.

When automatically pulling data from multiple systems, it is important to harmonise the output from various vendor tools. I recommend using open specifications such as the Security Content Automation Protocol (SCAP) and adopting policy-as-code expressions where possible. This can also help with integration into other capabilities within the company, such as the service desk, configuration management and more.
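One benefit of SCAP is that results arrive in a predictable structure. As an illustration, the sketch below extracts rule results from an XCCDF 1.2 results document (the kind produced by SCAP scanners, for instance OpenSCAP's `oscap xccdf eval --results`) using only the Python standard library. It reads the `target`, `rule-result`, `idref`, `severity` and `result` elements defined by XCCDF 1.2; the rule IDs in the example are made up.

```python
import xml.etree.ElementTree as ET
from collections import Counter

XCCDF_NS = {"x": "http://checklists.nist.gov/xccdf/1.2"}


def parse_xccdf_results(xml_text):
    """Return one row per rule-result found in an XCCDF 1.2 results document."""
    root = ET.fromstring(xml_text)
    # A results file may be a standalone TestResult or a Benchmark containing TestResults
    if root.tag.endswith("TestResult"):
        test_results = [root]
    else:
        test_results = root.findall(".//x:TestResult", XCCDF_NS)
    rows = []
    for tr in test_results:
        target = tr.findtext("x:target", default="unknown", namespaces=XCCDF_NS)
        for rr in tr.findall("x:rule-result", XCCDF_NS):
            rows.append({
                "asset": target,
                "rule": rr.get("idref"),
                "severity": rr.get("severity", "unknown"),
                "result": rr.findtext("x:result", default="unknown", namespaces=XCCDF_NS),
            })
    return rows


def summarise(rows):
    """Count results (pass, fail, notapplicable, ...) across all parsed rows."""
    return Counter(row["result"] for row in rows)
```

Because every SCAP-compliant scanner emits the same structure, the same parser can feed your data lake regardless of which vendor produced the scan.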

Data ingestion and monitoring frequency don’t have to be the same across all metrics; they can also change over time based on the wider context (e.g. reporting requirements or threat and vulnerability information), asset criticality, capability maturity and risk. Similar principles can be used to initiate event-driven assessments, for example as a result of an incident or a significant change to the environment.
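A minimal sketch of this idea follows: the collection interval depends on asset criticality, shrinks while a relevant threat advisory is active, and any triggering event (such as an incident or significant change) makes an assessment due immediately. The interval values and the halving rule are hypothetical placeholders for your own policy.

```python
from datetime import datetime, timedelta

# Hypothetical baseline collection intervals per asset criticality
BASE_INTERVAL = {
    "critical": timedelta(hours=4),
    "high": timedelta(hours=12),
    "medium": timedelta(days=1),
    "low": timedelta(days=7),
}


def collection_interval(criticality, active_threat=False):
    """More critical assets are polled more often; assumption: halve the interval during an active threat."""
    interval = BASE_INTERVAL[criticality]
    return interval / 2 if active_threat else interval


def is_due(last_collected, now, criticality, active_threat=False, triggering_events=()):
    """An assessment is due if its interval has elapsed, or at once if an event occurred since the last run."""
    if any(event_time > last_collected for event_time in triggering_events):
        return True  # event-driven assessment, e.g. incident or significant change
    return now - last_collected >= collection_interval(criticality, active_threat)
```

Keeping the schedule as data rather than hard-coding it makes it easy to revisit as your capability matures or the threat context changes.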

As described in NIST SP 800-137, automation doesn’t just have the potential to speed things up and reduce costs; it can also help detect patterns that a human may miss, especially when analysing huge volumes of data, for instance when verifying configuration settings across network devices.
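To illustrate the network device example, the sketch below checks a fleet of Cisco IOS-style running configurations against a small set of required and forbidden lines and reports only the non-compliant devices. The baseline patterns are a hypothetical example rather than a complete hardening standard; substitute your own.

```python
import re

# Hypothetical baseline for IOS-style configs; adapt the patterns to your own standards
REQUIRED = [r"^service password-encryption$", r"^logging host \S+"]
FORBIDDEN = [r"^ip http server$", r"^snmp-server community public\b"]


def check_config(config_text):
    """Return a list of issues for a single device configuration."""
    lines = [line.strip() for line in config_text.splitlines()]
    issues = []
    for pattern in REQUIRED:
        if not any(re.search(pattern, line) for line in lines):
            issues.append(f"missing: {pattern}")
    for pattern in FORBIDDEN:
        issues.extend(f"forbidden: {line}" for line in lines if re.search(pattern, line))
    return issues


def check_fleet(configs):
    """configs: mapping of device name -> running configuration text.

    Returns only the devices with at least one issue.
    """
    results = {name: check_config(text) for name, text in configs.items()}
    return {name: issues for name, issues in results.items() if issues}
```

The value lies less in any single check than in running it consistently across thousands of devices, where a human reviewer would inevitably miss some deviations.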

Not everything, however, can be easily automated. While you should initially focus on delivering a narrowly scoped prototype that you can iterate on, accept that some elements of your security programme will continue to be monitored manually.

After tackling the low-hanging fruit to demonstrate quick wins, the objective is to mature and expand the continuous monitoring capability. It helps to regularly review your metrics and thresholds in the context of your company’s risk appetite to ensure they are still relevant.

You can also define a set of requirements for new projects across the enterprise, so that newly deployed applications and infrastructure are onboarded onto your automated data collection pipeline from the start, saving you the retrospective effort. At the same time, a known good state of this environment can be defined and captured, so that any configuration drift or deviation can be detected in a timely manner. In this way, an Authorisation to Operate (ATO) can be granted and maintained as part of your continuous monitoring strategy.
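As a sketch of drift detection against a known good state, the code below fingerprints an approved configuration snapshot (captured, say, at onboarding or ATO time) and reports any settings that later differ from it. The setting names in the example are hypothetical, and a real implementation would pull the current state from your configuration management or cloud APIs.

```python
import hashlib
import json


def fingerprint(settings):
    """Stable SHA-256 hash of a configuration snapshot (keys sorted so ordering doesn't matter)."""
    canonical = json.dumps(settings, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def detect_drift(baseline, current):
    """Return {setting: (expected, actual)} for every setting that differs from the baseline.

    Settings added or removed since the baseline show up with None on one side.
    """
    if fingerprint(baseline) == fingerprint(current):
        return {}  # cheap check: identical snapshots need no detailed comparison
    keys = baseline.keys() | current.keys()
    return {k: (baseline.get(k), current.get(k)) for k in sorted(keys) if baseline.get(k) != current.get(k)}
```

Storing the fingerprint alongside the approved baseline gives you a quick way to confirm that a system still matches the state under which it was authorised.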
