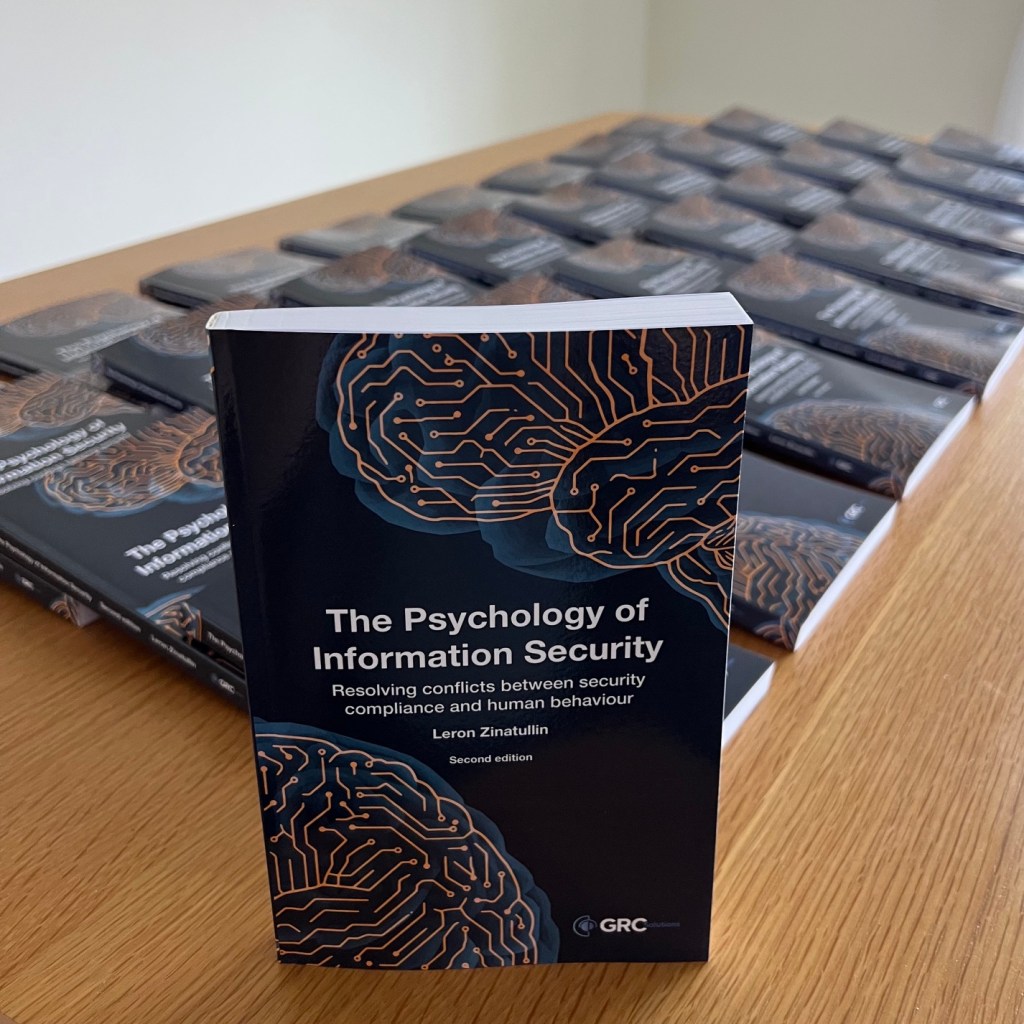

I’m super proud to have written this book. It’s the much improved second edition – and I can’t wait to hear what you think about it.

Please leave an Amazon review if you can – this really helps beat the algorithm, and is much appreciated!

A practical approach

A day of sessions on AI in the SOC, OT resilience, fragmenting regulation and machine-speed response.

We build systems that assume the human in the loop has steady attention and reliable judgement. Neither is true, and it is getting less true. Every alert, prompt, exception, and “are you sure?” draws from the same depleting account. By afternoon, the analyst approving a transfer, the engineer waving through a change and the executive clicking an MFA push are all running on the same low battery.

AI does not solve this. It compresses the timeline and raises the stakes of each remaining human decision. The questions we still hand to people – is this normal? do I trust this? should I escalate? – are exactly the ones tiredness destroys first.

You cannot train your way out of the fact that attention runs out. It is a design problem. That’s precisely what I discuss in The Psychology of Information Security.

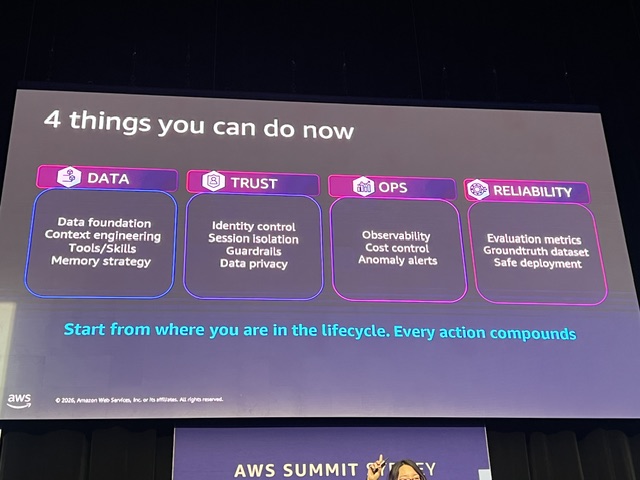

There is a shift happening in how the industry talks about agentic AI security. A year ago the conversation was speculative – what might go wrong, what we might do about it. Now it is specific. The platforms, primitives and patterns for operating agents safely exist as named things you can point at on a slide. The vocabulary is converging across vendors. The reference architectures are documented.

In this blog I explore the themes that mattered most, and what they mean for security teams.

One of the UK’s leading research-intensive universities has selected the second edition of The Psychology of Information Security to be included in their flagship Information Security programme as part of their ongoing collaboration with industry professionals.

“We incorporated The Psychology of Information Security into our MSc in Information Security, where it has become part of the essential reading for the Human Aspects of Security and Privacy module. Over time, it has proven to be a valuable anchor text within the curriculum, helping to frame discussions around the human dimensions of cybersecurity in a structured and coherent way.

Students consistently appreciate the perspectives it offers, particularly its ability to bridge academic research with real-world industry practice. It not only provides a clear roadmap through a complex and wide-ranging topic, but also encourages a broad understanding of the psychological principles underpinning everyday security challenges.”

Dr Konstantinos Mersinas, PhD, CISSP

Associate Professor, Information Security Group, Royal Holloway, University of London

Visiting Professor, Keio University Tokyo, Japan 特別 招聘 准教授 慶応 大学 東京 日本

Director of Distance Learning MSc Programme in Information Security

Vice Chair, INCS-CoE (International Cyber Security Center of Excellence)

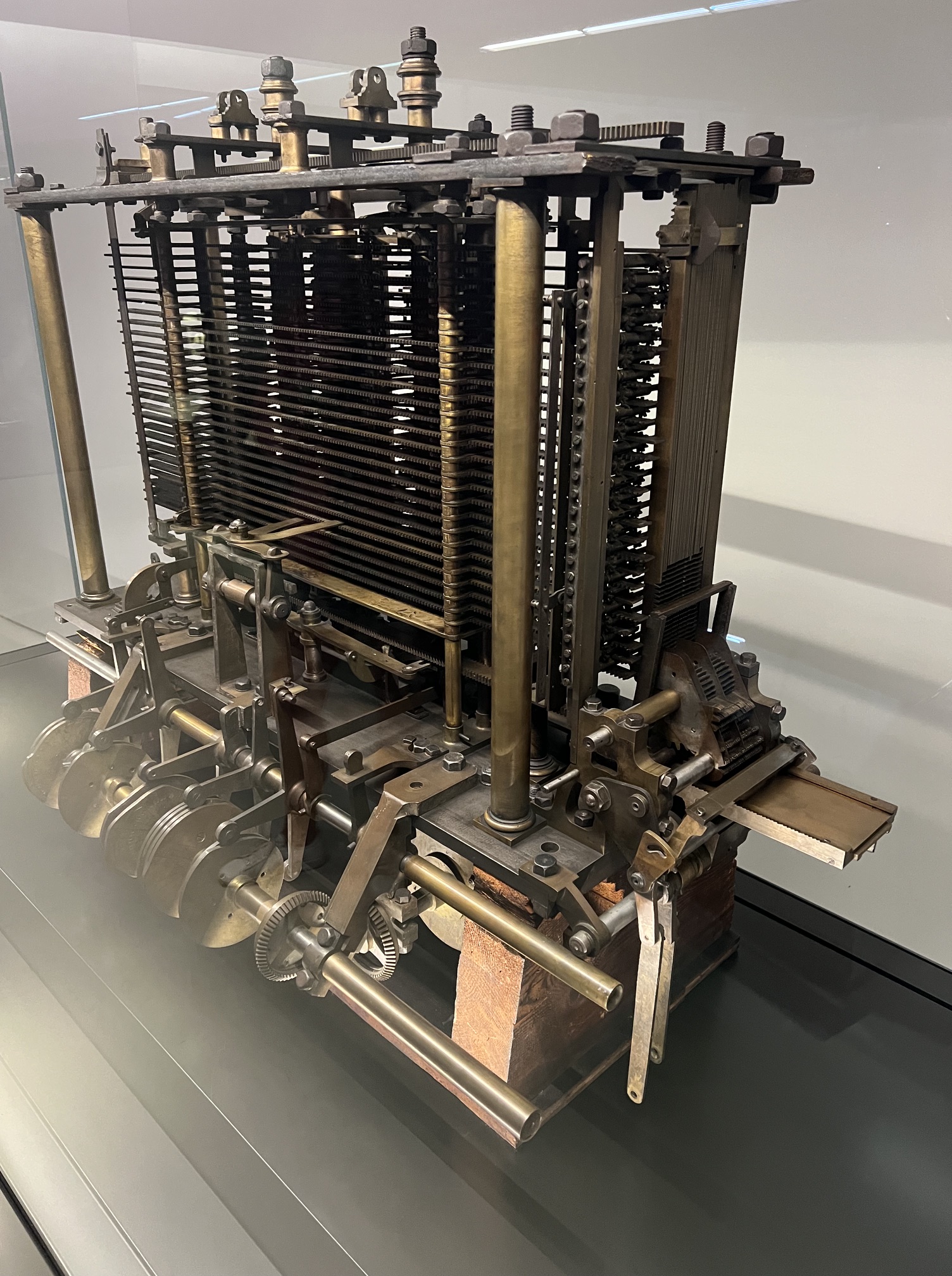

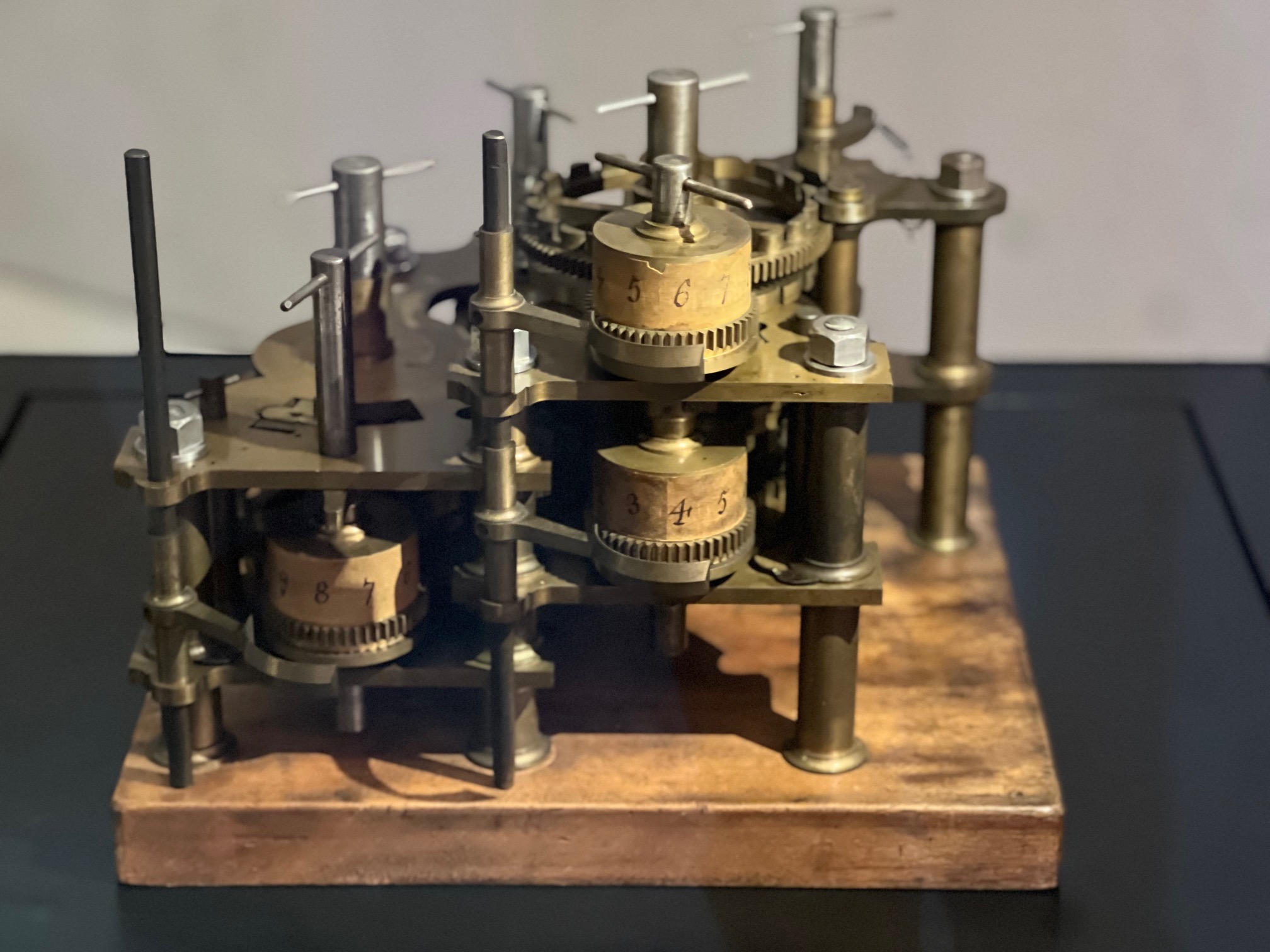

I went to the Science Museum in London last week to see Charles Babbage’s Difference Engine No. 2 – eight thousand parts of brass and steel, working exactly as he drew it in the 1840s.

Babbage’s problem was banal: the mathematical tables of his era, used for navigation and engineering, were riddled with errors that humans kept introducing. His solution was clever. Many useful functions can be approximated by polynomials, and polynomials can be evaluated with nothing but repeated addition. Build a machine that adds reliably, and the errors disappear.
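For the curious, here is a minimal Python sketch of that idea, usually called the method of finite differences. It is an illustration under my own assumptions, not a model of the engine's actual mechanism: the function names `initial_differences` and `tabulate` are hypothetical, and the starting column is seeded with ordinary arithmetic, much as an operator would work out the initial values by hand before turning the crank. After that, every new table entry comes from addition alone.

```python
def initial_differences(coeffs, start=0, step=1):
    """Seed the machine: return [p(start), first difference, second difference, ...].

    coeffs lists the polynomial's coefficients, highest degree first.
    This setup step uses multiplication; it stands in for the hand
    calculation done once before the machine runs.
    """
    def p(x):
        result = 0
        for c in coeffs:
            result = result * x + c  # Horner's rule
        return result

    values = [p(start + i * step) for i in range(len(coeffs))]
    diffs = []
    while values:
        diffs.append(values[0])
        # Replace the row with the differences between its neighbours.
        values = [b - a for a, b in zip(values, values[1:])]
    return diffs


def tabulate(diffs, count):
    """Produce `count` successive values of the polynomial using only addition."""
    state = list(diffs)
    table = []
    for _ in range(count):
        table.append(state[0])
        # Each column absorbs the column to its right, left to right,
        # so every addition uses the neighbour's value from the current row.
        for i in range(len(state) - 1):
            state[i] += state[i + 1]
    return table


# Example: p(x) = x^2 + x + 41, tabulated from x = 0.
seed = initial_differences([1, 1, 41])
print(tabulate(seed, 5))  # [41, 43, 47, 53, 61]
```

For a polynomial of degree n, the n-th difference is constant, which is why a fixed set of adding columns is enough to keep the table going indefinitely.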

He never finished it. Funding ran out, his engineer quit, and his attention had already moved on to the Analytical Engine – a programmable, general-purpose machine with separate memory and processing units. He didn’t build that either. The contraption I was watching was assembled by the Science Museum between 1985 and 2002, mostly to settle whether Babbage’s drawings were buildable with Victorian engineering tolerances. They were. He was a hundred years early.

A lot of modern computer science is already implicit in the design. The split between data and operation we attribute to von Neumann. Punched-card input, borrowed from the Jacquard loom, that survived into the FORTRAN era. Ada Lovelace, writing notes on the unbuilt Analytical Engine, asking what it would mean for a machine to compute in general – a question we are still answering.

But the real legacy is the bet underneath all of it: that thinking, or some of it, is a physical process you can build out of parts. Every model we train and every program we write assumes Babbage was right.

Today, organisations are caught between two opposing forces. On one side is the drive for operational efficiency through digital transformation and AI adoption. On the other is an asymmetric cyber threat landscape.

As adversaries use AI to increase the scale and sophistication of attacks, overwhelming already stretched cyber teams, defenders must respond in kind and use AI to strengthen security.

The traditional security model is reactive. When a threat is detected, a human must review, validate and remediate. In the time it takes an analyst to finish their first coffee, an AI-driven adversary can exfiltrate sensitive data.

For organisations that depend on customer trust and regulatory compliance, “responding as fast as we can” is no longer within risk appetite. Humans cannot scale to match the speed of automated code.

AI is becoming central to the future of cyber defence. While much of the industry focuses on automating security operations triage, the true power of AI lies in automating complex, proactive security and compliance functions that previously required thousands of human hours.

Excited that my book just hit #1 on Amazon’s bestseller list. Thank you to everyone who read, recommended, reviewed and supported this project – I couldn’t have done it without you. If you’ve read it, I’d love to hear what resonated most.

If you haven’t read it – it’s currently on offer in some Amazon stores, so get your 23% discount while you can!

And yes – it’s technically #1 in a very specific category, which is slightly amusing… I suspect it’s a hit with late-night cyber security enthusiasts rather than beach readers!

It was good to moderate a discussion on bridging the gap between strategy and execution. Great, candid conversation and plenty I’ll take back to the office.

Key takeaways:

☑️ Buy-in happens when you translate risk into business impact, work across functions and deliver early, visible wins.

☑️ Common pitfall: a glossy PowerPoint deck with no delivery plan. Convert vision into smaller, time-boxed outcomes with clear owners.

☑️ What makes the difference: realistic roadmaps, measurable OKRs (outcomes not activity), empowered teams and a steady governance cadence that removes blockers.

Thanks to the panellists and everyone in the audience who challenged orthodoxies – I learned as much as I hope I gave.

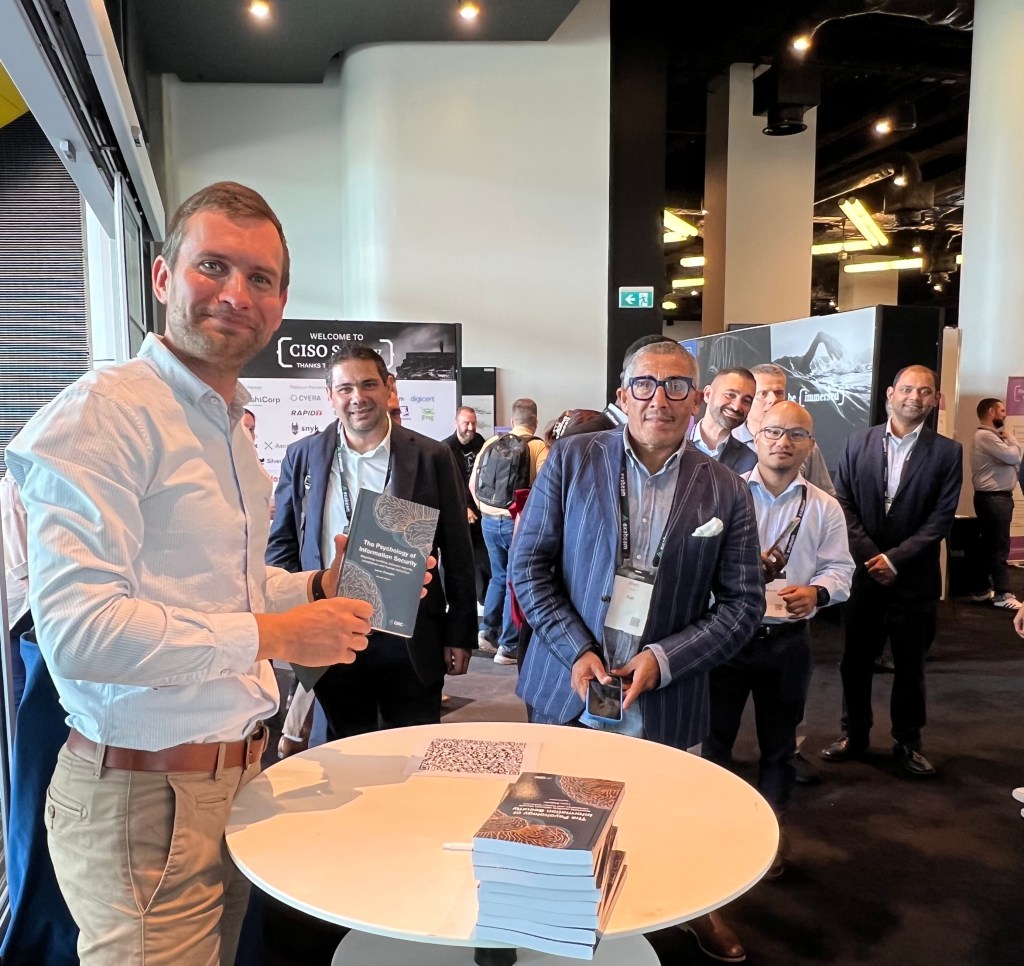

Thank you to everyone who stopped by the book signing. It was a pleasure to meet readers and hear your thoughts. If you missed it, you can still get the book on Amazon:

https://a.co/d/0fai5zyh

Security failures are rarely a technology problem alone. They’re socio-technical failures: mismatches between how controls are designed and how people actually work under pressure. If you want resilient organisations, start by redesigning security so it fits human cognition, incentives and workflows. Then measure and improve it.

Apply simple behavioural-science tools to reduce errors and increase adoption:

A reporting culture requires psychological safety.

Stop counting clicks and start tracking signals that show cultural change and risk reduction:

Always interpret in context: rising near-miss reports with falling latency can be positive (visibility improving). Review volume and type alongside latency before deciding.

If the rate rises sharply with no matching reduction in incidents, check whether confusion is driving the questions (update the docs) or whether new features need security approvals (streamline the process).

An increase may signal stronger capability and perceived efficacy of security actions, while a decrease may indicate skills gaps, tooling or access friction, or a perception that actions don’t lead to change.

Metrics should prompt decisions (e.g., simplify guidance if dwell time on key security pages is low, fund an automated patching project if mean time to remediate is unacceptable), not decorate slide decks.

Treat culture change like product development: hypothesis → experiment → measure → adjust. Run small pilots (one business unit, one workflow), measure impact on behaviour and operational outcomes, then scale the successful patterns.

When security becomes the way people naturally work, supported by defaults, fast safe paths and a culture that rewards reporting and improvement, it stops being an obstacle and becomes an enabler. That’s the real return on investment: fewer crises, faster recovery and the confidence to innovate securely.

If you’d like to learn more, check out the second edition of The Psychology of Information Security for more practical guidance on building a positive security culture.

As AI adoption accelerates, leaders face the challenge of setting clear boundaries, not only around what AI should and shouldn’t do, but also around who holds responsibility for its oversight.

It was great to share my thoughts and answer audience questions during this panel discussion.

Governance must be cross-functional: security, risk, data and the business share accountability. I also reinforced the importance of guardrails, particularly for agentic AI: automate low-risk work, but keep humans in the loop for decisions that affect safety, rights or reputation. Classify models and agents by impact and apply controls accordingly.