Interview with Yousef Syed – Enterprise Security Architect at Bayvision Limited

Let’s start with the basics. How did you start your journey in information security?

Back in 1998 I graduated with a BSc in Computer Science and chose to focus on Object Oriented Analysis & Design and Java. In September 1999 I started contracting in the telecom industry in Netherlands. It was a unique, pre-dotcom-crash situation. A brand new multi-billion-dollar joint venture between two telecom groups – loads of money, starting from scratch with next to no infrastructure – really brilliant place for a relatively new graduate to come in to. A start-up with loads of money! While, officially regarded as a “Web Developer”, since they had no developer PCs, no servers, no DEV/TEST/Prod, no source-control, no standards, no policies, no DMZ; I ended up being involved in everything – and it was great! Set up policies, set up development standards, and specifying and ordering the PCs and software for the developers; specifying the servers for the website/database, and then being in meetings with the networking people to define what the firewall rules were going to be, and what we needed to do. Basically, doing everything!

A year later, I began a contract in Munich working as a secure Java developer using the new JAAS (Java Authentication and Authorisation Service) API in an Agile/Extreme programming team. So back in 2000 I was exposed to security and ever since I’ve kind of been in-and-out, either just doing application development or some other branch of security.

Then, in 2005 while I was working permanently with Accenture and I was exposed to identity management as a specific field –Thor Xellerate (later to become Oracle Identity Manager). Working on various client sites, I found identity management very interesting because it cut across every part of the business. Everybody in the business needs access to multiple, different systems, and the IDM provisions and de-provisions the users with the appropriate level of access, for all users.

Very interesting. What are you working at the moment?

In January, I was contacted by a cloud-based accountancy firm regarding a cyber-security voucher that the government was funding to encourage Small and Medium sized firms and Sole-traders to improve their cyber security stance.

It was one of the more enjoyable projects I’ve been involved in. Compared to working in a large faceless organisation, or government department, where you might disappear in amongst thousands of other small cogs, and your influences is small; here, you get to make an impact. When you are working in a smaller organisation of about 50 people or up to 200 people; your influence and impact is clear to see and is appreciated by the client. More over, since I’m communicating directly with the business leaders, the security serves the business needs instead of just an individual department’s needs.

Why do you think this is important? What is the main difference between working for a small company compared to a big one?

I believe you are going to understand their business better, so you can give genuinely relevant advice. You don’t need to worry about keeping your consultancy/employer or specific business-unit happy. You just need to focus on the business, and on giving them the best advice for them.

I hope to keep doing this for the foreseeable future, because it is easy to get bored of working in big companies. I mean, big organisations are nice because you get exposed to good technology, complex problems and huge projects etc., but as far as getting return for your work where you actually see results there and then; then you can’t beat the immediacy of an SME. You don’t need permission/sign-off from a dozen different stakeholders before you update that policy document. You change that policy or that you give them a piece of advice that has changed their focus to create a secure coding development platform; how to improve testing on that; you’ve given them access to resources they didn’t even know about, and you’ve given them new ideas and new perspectives.

And you’ve also shown them how they can actually improve themselves. So if they go for ISO 27001, that might be a differentiator between themselves and their competitors, and it’s also something that they can tell their customers: “We value your data privacy seriously, we have these standards in place, and we’re looking after you.”

When you work in security, you take a lot of things for granted. But then you go to some small or medium-sized companies, and they’ve been so focused on building their small business and delivering new functions, security is way back in their list of priorities. Now you get to raise that up and show them that it not only benefits them on the compliance side of things, but that the benefit also lies in their knowing where their data is, who has access to their data, they know when they have access to it. And you can put all sorts of different levels of controls onto things and give them a far greater peace of mind about how they are dealing with things internally to their company and how they are dealing with things with regards to their product. So delivering this cyber-security voucher to SMEs is something that I’m pursue with a lot more zeal at the moment, because I never knew about it before, and I know it can make a big difference to all of them. £5K is pretty meaningless to your average Fortune 500 company, but to an SME it is a pretty big deal.

What in your opinion is the main obstacle in implementing a similar approach in large corporations?

One of the problems with large corporations, and the same thing in government, is that each separate individual department has a budget. And they need to work to that budget, and they need to ensure that they are doing enough so that they get the same budget or more next year. And it gives a very narrow view to what they are doing. For me the best security (and IT investment in general) is when it is applied at the enterprise-level, across all of the various business units, and considers how we can make all of these people work well together. There is no point in having a really strong security in your finance department, when another department isn’t even talking to them, and they are doing similar work but on different platforms. On the one hand, you are wasting money, because they are duplicating work, they are duplicating data, they are duplicating the risks involved: in fact they are not even duplicating, they are making the risks much wider, because they may not be tracking where the data is going, and on another platform; its going all over the place.

[So if one department has Oracle DB, another is running Sybase and another small team has MS Access; a) You have the cost of the separate platforms; b) separate licensing for same task; c) you need to harden each platform separately; d) you need define a mechanism to share the data across the systems to maintain the integrity of the data; e) you need to support and patch separate systems etc. Conversely, the enterprise could have defined a single Database platform that all departments to use thus saving a world of pain.]

And while the ISO 27001 and various other standards out there will give you a kind of check-box compliance, “yes, we did this, we did this,” it doesn’t give you the kind of thing to say, “I feel comfortable about this.” Yes, you might feel comfortable about it if the legal department comes and questions you about it, but do you feel comfortable enough about it to be able to say that we have done a good job here, and we have delivered something to the client that actually works for them?

What about the security culture?

Yes, one of the things that I kept on stressing to one of my clients was: “you have a culture here that works for you. You have a very nice environment because everybody knows what’s going on here. If you are a developer, you know everybody in marketing, you know everybody in sales, and everybody knows you. And you have very free-flowing information going on, and it helps a great deal in how you operate. So when you are adding security controls, you don’t want to break what’s already working. You want to make sure it becomes better”.

Can security awareness training help to resolve this?

There is a problem with awareness training and educating users. If you are like me, I come from a technical background, you become very narrow-minded thinking: well I find technology very easy, why can’t you work it out? “Well, because I work in marketing!”

I don’t know anything about marketing so why should they know anything about technology?

There is a certain level of arrogance that we in technology developed about other people: in fact, there is a massive amount of arrogance, you come up with all kinds of deprecating or dismissive terms like “problem exists between keyboard and chair (PEBKAC)” or other phrases, just because they just don’t understand. Why don’t they understand? Because they are qualified for something completely different, something which you don’t understand.

So one should stop being so arrogant, step into their shoes, and understand them or try to find a way to translate what you do into terms that they will understand.

So what is the solution?

For me, I always go back to real world examples. Most people understand security from the real world. We are used to carrying five or six different keys for different things. But on the Internet, people only use one key; they only use one password. And they use it everywhere. So when it gets lost, people have access to everything that they own.

In the real world, I have a separate key for my car, a separate key for my home, a separate key for the main door vs. a separate key for my own apartment, and we are used to this kind of thing. But trying to explain to a user why it is that we use a password manager, we have to explain it to them in terms that they will actually understand, and actually take time for them to join the dots within their brain. “So that’s why I should have a different password. That’s why I should make my password really difficult.” Until they put two and two together, they are going to go for whatever is easiest.

So there are a lot of places where not only security people, but technology people in general need to learn to meet the end user halfway and make security transparent and ubiquitous: make security a layer that they don’t necessarily need to think about so much. But from our side, we need to make our code secure, we need to make our cloud system interactions secure, and we need to make our data policies and the implementation of our data policies secure.

Can you elaborate on security policies? Do you see any problems with them?

There’s no point in writing something in your policy that everyone is ignoring. There was a company a few years ago with the policy that nobody was allowed to use Microsoft Messenger. Everyone was using Microsoft Messenger! Your policy says this, and everyone is doing something different. So why is it written in your policy? Either train your people to not use it, and give them a valid, relevant, genuine alternative that they can use, or don’t put it in your policy.

And there are loads of things in the policy documents to please the auditors and to please the compliance team. But that is not how you do security. It in fact makes security worse because it gives the illusion to management that all these things are in place, when all the while the users are bypassing or ignoring it.

How security professionals can help the management in this case?

You need to give them the tools that help. If they are carrying client-data upon which they need to write reports, they need to do some data classification, state who should have access to it and how valuable things are. So if you have classified data, how should you encrypt it, how should you store it, how should you transfer it. So, for argument’s sake, buy a set of encrypted USB keys. If you know that people are working off of their laptops, get something like TrueCrypt or something else that encrypts their laptop, so that their laptop is encrypted if their laptop goes missing or something, you’re safe. Institute Two-Factor-Authentication. And, educate the user: you make sure they understand.

Are there any problems with implementation of such solutions?

Big corporations get these things, they throw loads of money at it, but they don’t look at it from the perspective of how does a business actually use this.

So one of the things which I was saying was that yes you can buy a really cool firewall and IPS system, but you can also do a simple hardening of your database, of your OS, of your application server, close down all of the open ports, close down all of the services that shouldn’t be on, and lets do some monitoring on some user behaviour, on how people are accessing your system. That will give you, for much cheaper, a whole load of control and peace of mind.

What the possible solution might be then?

From the technology and security side, you need to be aware of the business, and what are the drivers of this business, where do they make their money, where is the “data gold”, and what do they need to protect, and how they are going to protect that, and remain operational. You’ve got data which is very valuable to the company because it’s being used. If they can’t use that data, it’s worthless to them. So if you lock it down too much, or you prevent it from moving around to certain people, then you’re preventing the company from doing business. So until you actually understand that, you can’t put in the relevant controls to allow them to use their data and have a level of security.

You’re never going to be 100% secure, so trying to dream that you are going to be 100% secure is a waste of time, trying to do it by way of fear and scaring your client into doing things is a completely wrong way of doing things, because when you are in a state of fear, your judgment is so far off the mark, that it’s ridiculous. Whereas in the case of “I understand what I’m doing over here. Yes, there are some dangers in this. But we understand it.“

Can you give an example?

It is all about risk management – don’t be afraid of them; simply understand them and manage accordingly.

I’m a snowboarder, and last winter I did an avalanche skills training certification course. The way they manage risk is very similar to how we in security do many things (In many respects they are better because lives depend on it). They have to look at a lot of different things that can trigger an avalanche –current weather conditions, weather over the preceding weeks, terrain, different types of snow; which places you are in danger of being in an avalanche, and there are various trigger points and safety concerns (yes, it gets complicated). You don’t live in fear of avalanches because you saw something in some crappy disaster movie. Instead you live in awareness of it and manage your safety. It’s called awareness training, as opposed to a “you’ll magically be safe from an avalanche by doing this.” It’s a case of saying: right, these are different factors that can trigger an avalanche and these are different things that can make you safer from an avalanche.

So if you have had a lot of snowfall in the preceding days followed by a rapid thaw, then it is very likely that some areas on the mountains will have avalanches. So under those circumstances, you don’t go out into very steep slopes, you stay on the slopes that are shallower, or in the treeline. Yes, you may not have as much fun, but you live to play another day.

Before you go out, there are a few things that you are supposed to do. You’re supposed to notify people that this the zone that we are going to, you define a leader of the group, you take all the various precautions that you have all the avalanche transceivers, probes and shovels, and all these kind of things so that, if the worst happens, you are prepared to dig someone out, and that kind of stuff.

Are there any issues applying the same principles to security?

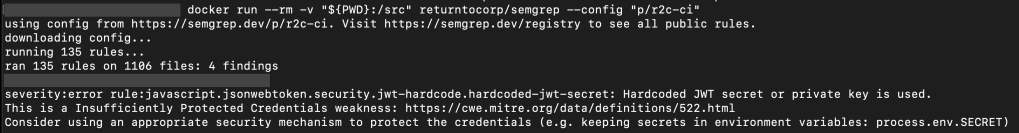

When you go to have your tyres changed, you don’t need to tell the mechanic to make sure to pump up the tires to the correct pressure. They do it automatically. But for us in IT, we need to stipulate this bunch of standard documents and requirements, and we have this non-functional sets that put these standards in place, “you will make sure that you are using this framework” to prevent SQLi attacks. People should be doing this automatically in our industry (it should be part of our quality process), but we don’t do it.

And there is a lot on our side to blame, because we don’t communicate properly, we don’t talk to the right people. And we also have a tendency to think: I told you once, how come you haven’t changed it?

We want it instantly, or at the latest, tomorrow. No, it’s going to take them time to learn, it’s going to take them time to step up their game to the correct level. And you need to be appreciative of this.

What is your approach?

There’s no one magic bullet that will solve this problem, since it is spread across so many systems and every business is different.

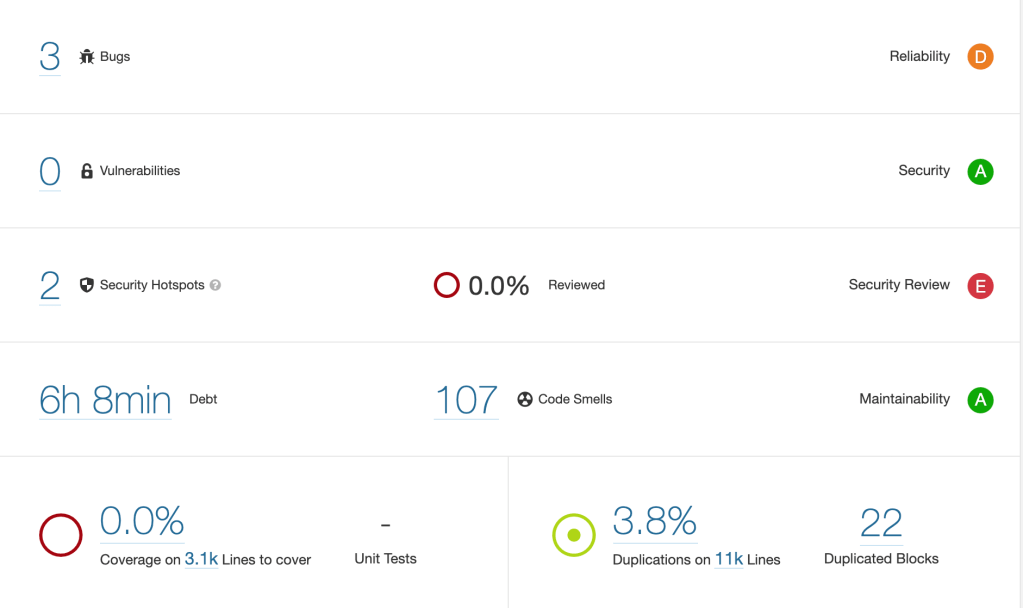

For a previous client, following initial meetings, I setup multiple security roadmaps for them in the three areas that we chose to focus on: business continuity planning; software development and data privacy.

How was this to be achieved? What steps must we take in the next week, month, quarter and where do we expect to be in six months from now. The steps we take must be measureable to some degree. This allowed us to apply a maturity model to it.

It involved some technology, some education, and a lot of communication across business domains and teams to ensure we were serving the business. It also involved the flexibility to acknowledge what isn’t working and change accordingly.

So we have a way of setting these things up so that we can track how well are we doing and where we are, and then you’ve got the ways for them, for the technical team to give feedback back to management, to say that “we’ve added this, and this has given us this additional benefit”.

Thank you Yousef. A few final words of wisdom, please.

You need to be honest with your customer. Sometimes they are not going to listen and you are going to have to do what they want you to do, and that’s part of the business. But you need to understand what the business is, and not just the department that you are being called into. You need to explore what is going on at an enterprise level.