Product security is more than running code scanning tools and facilitating pentests. Yet that’s what many security teams focus on. Secure coding is not a standalone discipline; it’s about developing systems that are safe. It starts with organisational culture, embedding the right behaviours and building on existing code quality practices.

Test-driven development, and test-driven security in particular, is a good way to define a set of requirements and checks before development begins. It’s a shift in mindset for some teams, but well worth a go. Writing code to pass a set of predefined tests is one of the best ways to build in a security baseline from the start.

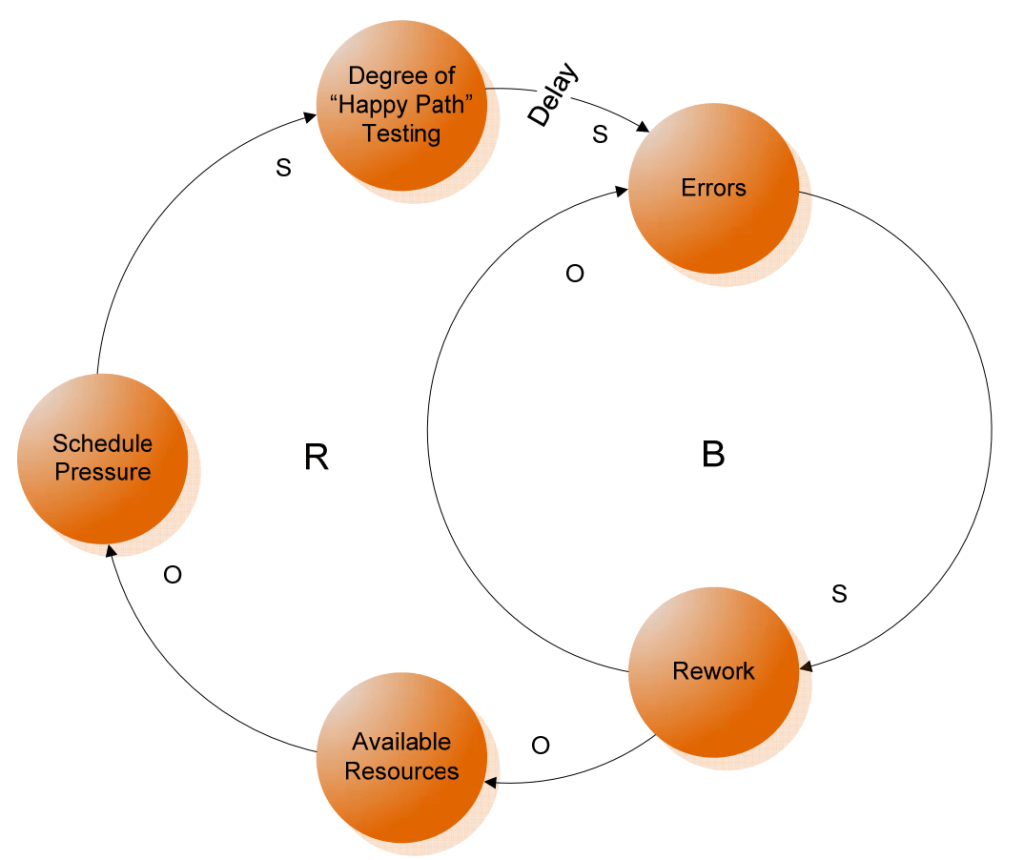

The way tests are written matters a great deal, however. Tests should simulate real-world conditions, not only verify that the required functionality is in place. ‘Happy path’ testing of that kind doesn’t reflect how real users interact with the live system, so it tells us little about errors and their consequences for system behaviour.
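To make the difference concrete, here is a minimal sketch of a happy-path test next to a handful of unhappy-path ones. Everything in it is made up for illustration: `parse_quantity`, the `MAX_QUANTITY` limit and the list of hostile inputs are assumptions, not taken from any real system.

```python
# Hypothetical example: validating an order quantity typed in by a user.
MAX_QUANTITY = 1000  # assumed business limit, for illustration only


def parse_quantity(raw):
    """Return a validated quantity as an int, or raise ValueError."""
    if not isinstance(raw, str):
        raise ValueError("quantity must be a string")
    text = raw.strip()
    # Accept plain ASCII digits only: no signs, decimals or other scripts.
    if not (text.isascii() and text.isdigit()):
        raise ValueError("quantity must be a whole number")
    value = int(text)
    if not 1 <= value <= MAX_QUANTITY:
        raise ValueError("quantity out of range")
    return value


def rejects(raw):
    """True if parse_quantity refuses the input."""
    try:
        parse_quantity(raw)
    except ValueError:
        return True
    return False


def test_happy_path():
    # The only case a purely functional test would cover.
    assert parse_quantity("3") == 3


def test_unhappy_paths():
    # Inputs a real (or malicious) user might send.
    hostile_inputs = [
        "", "-1", "0", "1001", "1e3",
        "3; DROP TABLE orders", "\u0663", None, "9" * 20,
    ]
    for raw in hostile_inputs:
        assert rejects(raw), f"accepted unexpected input: {raw!r}"
```

The happy-path test alone would pass against a much sloppier implementation (a bare `int(raw)`, say). It’s the second test that forces the validation to exist.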

What should we test for? Many would look to the OWASP Top 10 for common security vulnerabilities, but I think OWASP’s Application Security Verification Standard (ASVS) is a better starting point. It’s a comprehensive document written by developers for developers, and it gives practical suggestions on how to mitigate common security vulnerabilities in the form of testable statements.

Think of this standard as a checklist for application security. It has three levels depending on the functionality you are developing. Level 1 is already better than the OWASP Top 10 but still insufficient for secure applications. Level 2 is something you should strive towards, with Level 3 reserved for critical systems.

This checklist can be automated and form part of your acceptance criteria or definition of ‘done’.
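As a rough sketch of what that automation could look like, the snippet below pairs each checklist statement with an executable check. The item IDs (`PW-1`, `PW-2`), the `is_password_acceptable` function and the 12-character threshold are placeholders I’ve assumed for illustration; take the real statements and values from the version of the standard you adopt.

```python
from dataclasses import dataclass
from typing import Callable, List

# Assumed threshold, for illustration only.
MIN_PASSWORD_LENGTH = 12


def is_password_acceptable(password: str) -> bool:
    """Hypothetical application function under test."""
    return len(password) >= MIN_PASSWORD_LENGTH


@dataclass(frozen=True)
class ChecklistItem:
    item_id: str                 # placeholder ID, not a real ASVS reference
    statement: str               # the testable statement, in plain language
    check: Callable[[], bool]    # returns True when the statement holds


CHECKLIST = [
    ChecklistItem(
        "PW-1",
        "Passwords below the minimum length are rejected",
        lambda: not is_password_acceptable("short"),
    ),
    ChecklistItem(
        "PW-2",
        "Long passphrases are accepted",
        lambda: is_password_acceptable("correct horse battery staple"),
    ),
]


def run_checklist(items: List[ChecklistItem]) -> List[str]:
    """Return the IDs of failed items; an empty list means 'done'."""
    return [item.item_id for item in items if not item.check()]
```

A pipeline step could call `run_checklist(CHECKLIST)` and fail the build whenever the returned list is non-empty, which turns the checklist into an enforced acceptance criterion.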

Some professionals might dislike checklists but it’s worth remembering that most safety-critical industries use them, and for a good reason: many checklists have been developed as a result of tragic incidents.

As Matthew Syed suggests in his book ‘Black Box Thinking’, learning from our mistakes and the mistakes of others is crucial if we want to improve. Cyber security is no exception.

Software now underpins almost every field of modern life, including healthcare, aviation, transportation, space, infrastructure and beyond. One would hope software that we write for these industries is as safe and secure as possible.

If we develop comprehensive tests and perform security checks in the CI/CD pipeline in line with the DevSecOps approach, we don’t have to wait for pentesters, bounty hunters or worse to show up and exploit our products and platforms.

Security should be an aspect of quality and integrated into the overall software assurance process. That’s why pair programming and mob programming often contribute to delivering more secure code: we get feedback and catch errors sooner. However, developers need to be familiar with secure coding practices to take advantage of the security benefits of such real-time peer review.

A more proactive approach to preventing vulnerabilities is threat modelling. It’s akin to asking ‘what can go wrong?’ before a single line of code is even written.

There are useful frameworks like STRIDE that help structure the threat modelling process. At its core, however, it’s about understanding what you are building, what risks exist and what you are going to do about them.
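A threat model doesn’t need special tooling to get started. The sketch below records threats against the six STRIDE categories and answers two questions: which categories haven’t been considered yet for a component, and which recorded threats still have no decision attached. The `Threat` structure, the ‘login API’ component and the sample entries are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information disclosure"
    DENIAL_OF_SERVICE = "Denial of service"
    ELEVATION_OF_PRIVILEGE = "Elevation of privilege"


@dataclass
class Threat:
    component: str         # what you are building
    category: Stride       # what kind of risk exists
    description: str
    mitigation: str = ""   # what you will do about it; empty = undecided


def uncovered_categories(threats: List[Threat], component: str) -> List[Stride]:
    """STRIDE categories with no recorded threat for the component."""
    seen = {t.category for t in threats if t.component == component}
    return [c for c in Stride if c not in seen]


def unmitigated(threats: List[Threat]) -> List[Threat]:
    """Threats that have been identified but have no decision yet."""
    return [t for t in threats if not t.mitigation.strip()]


# Hypothetical entries for an imaginary login API.
MODEL = [
    Threat("login API", Stride.SPOOFING,
           "Attacker replays stolen credentials",
           "Require multi-factor authentication"),
    Threat("login API", Stride.DENIAL_OF_SERVICE,
           "Unlimited login attempts exhaust resources"),
]
```

Here `uncovered_categories(MODEL, "login API")` lists the four categories nobody has discussed yet, and `unmitigated(MODEL)` returns the denial-of-service entry, which is a reasonable agenda for the next threat modelling session.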

The pre-mortem technique is a variation on this theme: the team begins by imagining that a security incident has occurred and works backwards to the potential flaws that might’ve led to it.

At the end of the day, to write secure code it’s essential to learn to think like an attacker. How can this be exploited? Step off the happy path and ship quality code. Someone’s life might depend on it.